Why this unit

Proofreading is the quality-control (QC) layer that determines whether downstream analyses of a reconstruction can be trusted.

Learning goals

- Classify and correct merge/split/boundary errors.

- Tie corrections to explicit quality metrics and logs.

Core technical anchors

- Metrics: variation of information (VI), edge precision/recall, expected run length (ERL), synapse-centric F1.

- Priority strategy for high-impact error correction.

- Human-machine workflow separation: discovery, adjudication, finalization.
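As a concrete reference point for the VI metric listed above, here is a minimal sketch that splits VI into its two conditional-entropy terms, so that split and merge errors can be read separately. It assumes the segmentation and the ground truth are given as equal-length sequences of integer labels (for example, flattened voxel labels); the function name `variation_of_information` is illustrative and not taken from any particular toolkit.

```python
import math
from collections import Counter


def variation_of_information(seg, gt):
    """Return (split_term, merge_term) in bits for two label sequences.

    split_term = H(seg | gt): grows when one true object is split across segments.
    merge_term = H(gt | seg): grows when one segment merges several true objects.
    Their sum is the variation of information.
    """
    if len(seg) != len(gt):
        raise ValueError("seg and gt must have the same length")
    n = len(seg)
    joint = Counter(zip(seg, gt))   # co-occurrence counts of (segment, truth)
    seg_counts = Counter(seg)
    gt_counts = Counter(gt)

    split_term = 0.0
    merge_term = 0.0
    for (s, g), count in joint.items():
        p = count / n
        split_term -= p * math.log2(count / gt_counts[g])
        merge_term -= p * math.log2(count / seg_counts[s])
    return split_term, merge_term


# One segment covering two true objects of equal size: a pure merge error.
print(variation_of_information([1, 1, 1, 1], [1, 1, 2, 2]))  # (0.0, 1.0)
```

Reporting the two terms separately, rather than only their sum, makes it easier to tell whether a correction batch reduced merges, splits, or both.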

Method deep dive: production proofreading loop

- Candidate triage:

Rank errors by estimated downstream impact (edge loss, motif distortion, cell identity risk).

- Local correction:

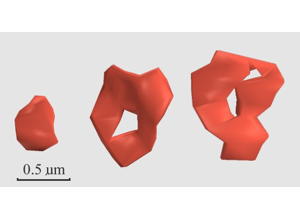

Resolve merge/split/boundary errors with 2D/3D contextual validation.

- Global consistency:

Recheck branch continuity and synaptic partner plausibility.

- Metric update:

Recompute targeted QC metrics after each correction batch.

- Release gate:

Promote only segments that pass predefined quality thresholds.
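The candidate triage step can be sketched as a weighted score over the three impact factors named above. This is an illustration only: the `Candidate` fields, the 0 to 1 scaling of each factor, and the weights in `WEIGHTS` are hypothetical and would need to be calibrated against a team's own downstream analyses.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    candidate_id: str
    error_type: str          # "merge", "split", or "boundary"
    edge_loss: float         # estimated share of affected edges, scaled 0..1
    motif_distortion: float  # estimated motif distortion, scaled 0..1
    identity_risk: float     # estimated cell identity risk, scaled 0..1


# Hypothetical weights; they are assumptions, not values from this unit.
WEIGHTS = {"edge_loss": 0.5, "motif_distortion": 0.3, "identity_risk": 0.2}


def impact_score(c: Candidate) -> float:
    """Weighted sum of the three estimated impact factors."""
    return (
        WEIGHTS["edge_loss"] * c.edge_loss
        + WEIGHTS["motif_distortion"] * c.motif_distortion
        + WEIGHTS["identity_risk"] * c.identity_risk
    )


def rank_candidates(candidates):
    """Return candidates ordered from highest to lowest estimated impact."""
    return sorted(candidates, key=impact_score, reverse=True)


queue = [
    Candidate("c1", "boundary", 0.1, 0.1, 0.1),
    Candidate("c2", "merge", 0.8, 0.2, 0.0),
    Candidate("c3", "split", 0.0, 0.0, 1.0),
]
for c in rank_candidates(queue):
    print(c.candidate_id, c.error_type, round(impact_score(c), 2))
```

A linear score is the simplest choice; its main benefit is that the ranking rule is easy to audit and to revise when reviewers disagree with the ordering.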

Recommended QC thresholding strategy

- Use block-level dashboards for VI and edge precision/recall, not just whole-volume means.

- Track ERL by cell class to detect morphology-dependent blind spots.

- Track synapse-centric precision/recall so that correctness is measured on the connections that downstream analyses depend on.

- Require explicit uncertainty tags for unresolved defects rather than silent acceptance.
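To illustrate why block-level reporting matters, the sketch below computes edge precision/recall per block and compares it with the pooled whole-volume figure. The `BlockQC` record, the edge counts, and the threshold defaults (`min_precision=0.95`, `min_recall=0.90`) are assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class BlockQC:
    block_id: str
    true_pos_edges: int   # predicted edges confirmed by ground truth
    false_pos_edges: int  # predicted edges absent from ground truth
    false_neg_edges: int  # ground-truth edges missing from the prediction


def precision_recall(b: BlockQC):
    """Edge precision and recall for one block (0.0 when undefined)."""
    predicted = b.true_pos_edges + b.false_pos_edges
    actual = b.true_pos_edges + b.false_neg_edges
    precision = b.true_pos_edges / predicted if predicted else 0.0
    recall = b.true_pos_edges / actual if actual else 0.0
    return precision, recall


def pooled(blocks) -> BlockQC:
    """Sum counts over all blocks to get the whole-volume view."""
    return BlockQC(
        "whole-volume",
        sum(b.true_pos_edges for b in blocks),
        sum(b.false_pos_edges for b in blocks),
        sum(b.false_neg_edges for b in blocks),
    )


def failing_blocks(blocks, min_precision=0.95, min_recall=0.90):
    """Return IDs of blocks below either (hypothetical) threshold."""
    failing = []
    for b in blocks:
        precision, recall = precision_recall(b)
        if precision < min_precision or recall < min_recall:
            failing.append(b.block_id)
    return failing


blocks = [BlockQC("A", 990, 10, 10), BlockQC("B", 40, 10, 10)]
print(precision_recall(pooled(blocks)))  # pooled view is above both thresholds
print(failing_blocks(blocks))            # ['B']
```

In this toy case the large, clean block A dominates the pooled counts, so the whole-volume figure hides the weak block B.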

Frequent failure modes

- Over-fixing low-impact errors: Prioritize corrections that materially change downstream conclusions.

- Inconsistent adjudication: Maintain standard operating examples for merge/split edge cases.

- Metric gaming: Pair global metrics with qualitative audits of biologically important structures.

- Human-fatigue drift: Rotate reviewers and monitor disagreement trends over time.
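Monitoring disagreement trends can start from something as simple as a per-period disagreement rate on doubly reviewed items. The sketch below assumes each record is a `(period, decision_a, decision_b)` tuple; the `tolerance` default is a hypothetical value, and comparing the first period with the latest one is a deliberately crude trend check.

```python
from collections import defaultdict


def disagreement_by_period(reviews):
    """Map each period to the fraction of items where two reviewers disagreed.

    reviews: iterable of (period, decision_a, decision_b) tuples.
    """
    totals = defaultdict(int)
    disagreements = defaultdict(int)
    for period, decision_a, decision_b in reviews:
        totals[period] += 1
        if decision_a != decision_b:
            disagreements[period] += 1
    return {p: disagreements[p] / totals[p] for p in sorted(totals)}


def is_drifting(rates, tolerance=0.05):
    """True when the latest rate exceeds the earliest by more than tolerance."""
    values = list(rates.values())
    return len(values) >= 2 and values[-1] - values[0] > tolerance


reviews = [
    (1, "merge", "merge"), (1, "split", "split"),
    (1, "merge", "split"), (1, "ok", "ok"),
    (2, "merge", "split"), (2, "ok", "merge"),
    (2, "split", "split"), (2, "ok", "ok"),
]
rates = disagreement_by_period(reviews)
print(rates, is_drifting(rates))
```

A rising rate does not by itself identify the cause; it is a cue to revisit the standard operating examples or the reviewer rotation.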

Practical workflow

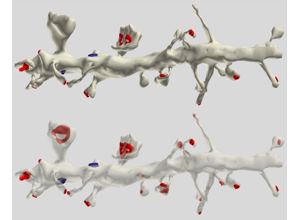

- Detect candidate errors in 2D and 3D context.

- Classify error type (merge, split, boundary ambiguity, identity confusion).

- Correct labels and record decision rationale.

- Validate continuity and synaptic context after correction.

- Log quality metrics for reproducibility and team review.
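One way to make the "record decision rationale" and "log quality metrics" steps concrete is a small structured log entry that refuses to be created without a rationale. The field names and the `ERROR_TYPES` vocabulary below mirror the workflow above but are otherwise assumptions; they do not follow the schema of any specific proofreading tool.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Error types from the classification step above.
ERROR_TYPES = {"merge", "split", "boundary_ambiguity", "identity_confusion"}


@dataclass
class CorrectionLogEntry:
    segment_id: int
    error_type: str
    action: str         # what was changed
    rationale: str      # why the reviewer decided this
    reviewer: str
    metric_name: str    # one QC metric tracked for this correction
    metric_before: float
    metric_after: float
    timestamp: str = ""  # filled with the current UTC time if left empty

    def __post_init__(self):
        if self.error_type not in ERROR_TYPES:
            raise ValueError(f"unknown error type: {self.error_type!r}")
        if not self.rationale.strip():
            raise ValueError("a decision rationale is required")
        if not self.timestamp:
            self.timestamp = datetime.now(timezone.utc).isoformat()


def to_json_line(entry: CorrectionLogEntry) -> str:
    """Serialize one entry as a single JSON line for an append-only log."""
    return json.dumps(asdict(entry), sort_keys=True)


entry = CorrectionLogEntry(
    segment_id=1,
    error_type="merge",
    action="split at branch point",
    rationale="two somata inside one segment",
    reviewer="reviewer_1",
    metric_name="edge_precision",
    metric_before=0.90,
    metric_after=0.95,
)
print(to_json_line(entry))
```

Writing one JSON object per line keeps the log easy to append to and to diff during team review.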

Visual training set

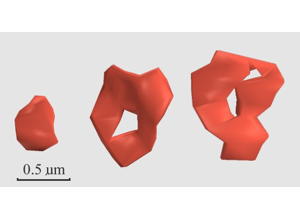

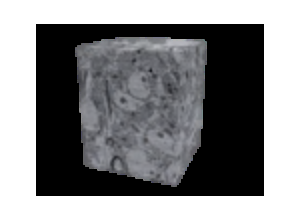

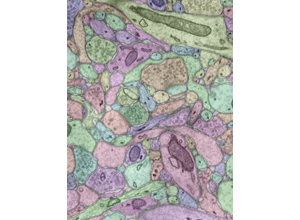

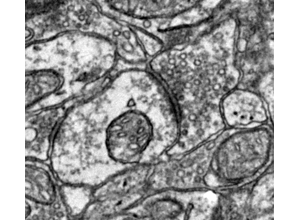

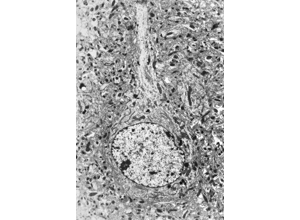

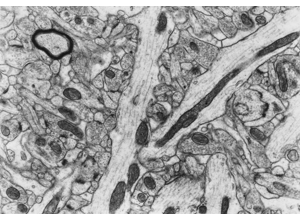

RIV-ULTRA S06: orientation cue for robust proofreading context.

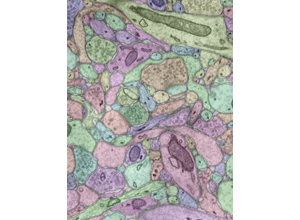

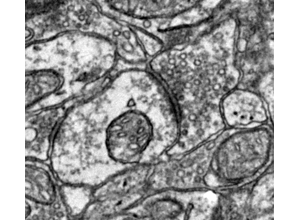

RIV-ULTRA S09: synapse-oriented features relevant to correction decisions.

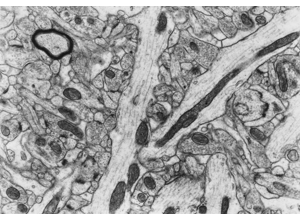

RIV-ULTRA S11: vesicle and organelle cues for ambiguity resolution.

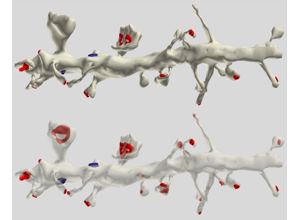

RIV-AXDEN S13: axon-vs-dendrite differentiation for identity checks.

RIV-AXDEN S18: edge-case morphology for high-risk correction review.

RIV-AXDEN S22: advanced cue set for difficult boundary calls.

Module14 L2 S03: method overview context for processing/QC integration.

Module14 L2 S08: graph/pipeline transition context.

Module14 L2 S09: automated detection context for human-machine workflows.

Module14 L2 S10: quality-relevant processing stage.

Module14 L2 S13: evaluation/metrics context for QC reporting.

Attribution: `RIV-*` visuals are from Pat Rivlin's training materials; outreach visuals are from the Module14 Lesson 2 extraction. Some planned IDs were unavailable among the extracted thumbnails and were replaced with the nearest available alternatives.

Discussion prompts

- Which error types most strongly alter downstream network conclusions?

- Where should human review be mandatory, even with strong model performance?

- What QC metadata is minimally required to make proofreading decisions auditable?

Quick activity

Take one candidate merge/split case and write a short correction log with before/after rationale and one QC metric.

Content library references

- Error taxonomy — Merge, split, boundary, and identity errors in detail

- Proofreading strategies — Exhaustive, targeted, priority-ranked, crowd-sourced approaches

- Proofreading tools — CAVE, Neuroglancer, Spelunker, NeuTu

- Metrics and QA — VI, ERL, edge F1, synapse-centric F1 with formulas

- Proofreading worked examples — Step-by-step correction scenarios

- Provenance and versioning — CAVE materialization and reproducible proofreading

- FlyWire whole-brain connectome — Collaborative proofreading at scale

Evidence pack: papers and datasets

This unit is anchored to canonical papers and datasets used in connectomics practice. Use these as required preparation before activities.

Key papers

Key datasets

Competency checks

- Prioritize proofreading corrections by biological impact.

- Report QC metrics tied to release/no-release decisions.

Capability development brief

Capability target: Run a production-ready proofreading workflow that prioritizes corrections by scientific impact.

Required expertise

- Segmentation scientist (model behavior and failure modes)

- Proofreading operations lead (queueing and throughput strategy)

- Quantitative QC analyst (precision/recall and uncertainty metrics)

Core concepts to teach

- Merge/split taxonomy: Standardized categorization of topological reconstruction errors.

- Impact-weighted triage: Prioritizing corrections that most affect downstream biological conclusions.

- Quality reporting: Translating correction activity into interpretable, reproducible QC metrics.

Studio activity

Proofreading Queue Optimization: Balance correction quality and throughput under limited expert time.

Design and justify a triage strategy for a backlog of mixed-severity errors.

- Classify queue items by error type and scientific impact.

- Define thresholds for immediate correction versus deferral.

- Simulate one review cycle and report outcomes.

Expected outputs:

- Queue triage policy

- Cycle metrics summary
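For the "simulate one review cycle" step, a starting point could be a greedy pass over the queue under a fixed expert-time budget. Everything here is an assumption made for the exercise: the queue items are plain dictionaries with `id`, `impact`, and `minutes` keys, `min_impact` is a hypothetical defer threshold, and ordering by impact per minute is only one of several defensible policies.

```python
def simulate_cycle(queue, budget_minutes, min_impact=0.3):
    """Simulate one review cycle under a time budget.

    queue: list of dicts with 'id', 'impact' (0..1), and 'minutes' (> 0).
    Items below min_impact are deferred outright; the rest are taken in
    order of impact per minute until the budget no longer fits them.
    """
    eligible = sorted(
        (item for item in queue if item["impact"] >= min_impact),
        key=lambda item: item["impact"] / item["minutes"],
        reverse=True,
    )
    deferred = [item["id"] for item in queue if item["impact"] < min_impact]
    corrected = []
    impact_resolved = 0.0
    remaining = budget_minutes

    for item in eligible:
        if item["minutes"] <= remaining:
            corrected.append(item["id"])
            impact_resolved += item["impact"]
            remaining -= item["minutes"]
        else:
            deferred.append(item["id"])  # does not fit in this cycle

    return {
        "corrected": corrected,
        "deferred": deferred,
        "minutes_used": budget_minutes - remaining,
        "impact_resolved": impact_resolved,
    }


backlog = [
    {"id": "a", "impact": 0.9, "minutes": 30},
    {"id": "b", "impact": 0.5, "minutes": 10},
    {"id": "c", "impact": 0.1, "minutes": 5},
    {"id": "d", "impact": 0.6, "minutes": 40},
]
print(simulate_cycle(backlog, budget_minutes=45))
```

The returned dictionary maps onto the expected outputs: the threshold and ordering rule form the triage policy, and the corrected/deferred counts, minutes used, and impact resolved form the cycle metrics summary.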

Assessment artifacts

- Proofreading SOP with triage rules and escalation criteria.

- QC dashboard definition with required metrics and update cadence.